It’s astounding how many buzzwords the tech bros will use every time they try to sell us some new AI bullshit.

What are the researchers trying to sell here?

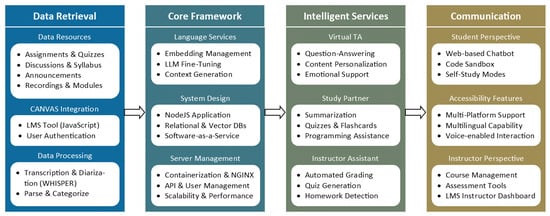

Did u read the abstract? They’re presenting a framework to use LLMs as educators in higher education. It’s quite cool really.

The idea is to create a professor personalised for every student and their learning patterns. Imagine hiring a professor to personally teach you something. The professor talks to you, creates little tests specifically for what they think your weaknesses are in the subject matter and so on.

This is very good for us, as human researchers will (assuming this tech works) be able to dedicate 100% of their time to research instead of teaching the same subject matter again and again every single year, coming up with tests, marking tests, grading their students’ papers and so on.

If this tech is successful, then education costs would nosedive like crazy, which would be good for all.

Basically Duolingo on steroids

Does Duolingo come up with personalised tests n stuff? Never used it much haha

It adapts to your mistakes and such, often offers “personalised practice” based on your weak spots, and gives a gamified language-learning experience

Dayum, that’s nice! Maybe I’ll use it if I ever want to learn a new language

Or AIEIAPALHE for short. Basically just yodelling into the void.

AIEIAPALHE

They should have stayed with ELIZA.

I’m confident we should start seeing stuff like this soon. The upcoming ChatGPT model (forgot the version name) is doing recursive prompts for itself, where it breaks down a big task into smaller ones, then runs and reruns those tasks before coming up with an output.

Right now, all LLMs just do a single pass and spit out the output. They don’t reason with themselves like we do yet. It’s kinda like what we do in quiz competitions or something, where we immediately shout “blue” when asked “what color is the sky”. However, when asked something more complicated, we don’t just answer quickly based on intuition, do we? We pause, think, rethink, look for counterarguments, patch holes in our statements and so on.

This is the ability that LLMs lack now. However, very soon they will be able to do that. This just opens up a crazy amount of things that they can do. Take code for example. Right now, LLMs just spit out code without seeing if it works or not. Now, they’ll be able to run it themselves, look for errors, bugs and so on, fix them and finally submit the output code.
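To make the "run it, look for errors, fix it" idea concrete, here is a rough sketch of what such a loop might look like. Everything in it is an assumption for illustration: `generate` stands in for whatever model call you would actually use, and `fake_model` is a hard-coded stub, not a real LLM or any vendor's API. It also just runs the generated code in a subprocess, which you would not want to do with untrusted output outside a sandbox.

```python
import subprocess
import sys
from typing import Callable, Optional, Tuple


def run_python(code: str, timeout: float = 5.0) -> Tuple[bool, str]:
    """Run code in a fresh interpreter; return (succeeded, stdout or error text)."""
    try:
        proc = subprocess.run(
            [sys.executable, "-c", code],
            capture_output=True,
            text=True,
            timeout=timeout,
        )
    except subprocess.TimeoutExpired:
        return False, "timed out"
    if proc.returncode == 0:
        return True, proc.stdout
    return False, proc.stderr


def generate_until_it_runs(
    task: str,
    generate: Callable[[str, Optional[str]], str],
    max_attempts: int = 3,
) -> Optional[str]:
    """Ask for code, run it, and feed any error back until it runs or we give up."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        code = generate(task, feedback)  # hypothetical model call
        ok, output = run_python(code)
        if ok:
            return code
        feedback = output  # the error text becomes part of the next prompt
    return None


def fake_model(task: str, feedback: Optional[str]) -> str:
    """Hypothetical stand-in for an LLM: buggy on the first try, fixed after feedback."""
    if feedback is None:
        return "print(undefined_name)"  # raises NameError when run
    return "print(sum(range(5)))"
```

Calling `generate_until_it_runs("add some numbers", fake_model)` runs the first attempt, sees it crash, passes the error text back, and returns the second attempt. A real setup would also need tests, since "it runs without crashing" is a much weaker check than "it does what was asked".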

Stuff like this would supercharge them quite a lot. Now, I know that I’m going to be downvoted to hell for talking about AI, cuz Lemmy hates it. Mark my words though - you’ll be able to do A LOT using LLMs because of this.

AI is going to exist and improve like crazy no matter what. The leftist position on this should be public ownership over these models and NOT pretending that they don’t exist.
